How to Spot and Prevent Deepfakes: Risks, Detection, and Global Solutions

- Updated April 25, 2025

Introduction

A political leader playing Garba, Morgan Freeman saying “I’m not Morgan Freeman”, Tom Cruise on TikTok: each of these clips felt strange because it showed something that never happened.

What Exactly Are Deepfakes?

Deepfakes are AI-generated content—video or audio—that fabricates events, making them seem real. They rely on advanced machine learning techniques, specifically an encoder-decoder system, to manipulate and reassemble faces and voices with striking accuracy.

According to The Indian Express:

"The algorithm identifies similarities between two faces and compresses them into shared features. Decoders trained on each face then reconstruct the manipulated image, swapping one face onto another. In essence, a compressed image of person X is run through a decoder trained on person Y, recreating X’s face with Y’s expressions."

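The data flow in that description can be sketched in a few lines of Python. This is only an illustration under loud assumptions: the class name `FaceSwapSketch`, the layer sizes, and the random weights are all hypothetical, nothing here is trained, and real systems use deep convolutional networks rather than a single linear layer. The point is the wiring: one encoder shared by both faces, one decoder per person, and a swap that sends person X's compressed face through person Y's decoder.

```python
import random

def make_matrix(rows, cols, rng):
    """Return a rows x cols matrix of small random weights (stand-in for trained weights)."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

def apply(matrix, vector):
    """Multiply a matrix by a vector (one linear layer, no bias)."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]

class FaceSwapSketch:
    """One shared encoder plus one decoder per identity (hypothetical, untrained)."""

    def __init__(self, pixels=64, latent=8, seed=0):
        rng = random.Random(seed)
        self.encoder = make_matrix(latent, pixels, rng)  # shared by both faces
        self.decoders = {
            "X": make_matrix(pixels, latent, rng),  # would be trained on person X
            "Y": make_matrix(pixels, latent, rng),  # would be trained on person Y
        }

    def encode(self, face):
        """Compress a flattened face image into shared features."""
        return apply(self.encoder, face)

    def decode(self, latent, identity):
        """Rebuild an image from shared features using one person's decoder."""
        return apply(self.decoders[identity], latent)

    def reconstruct(self, face, identity):
        """Training-time path: encode a face and decode it with its own decoder."""
        return self.decode(self.encode(face), identity)

    def swap(self, face_of_x):
        """Swap path: encode X's face, then decode with the decoder trained on Y."""
        return self.decode(self.encode(face_of_x), "Y")

model = FaceSwapSketch()
face_x = [0.5] * 64            # flattened 8x8 grayscale stand-in for a real image
latent = model.encode(face_x)  # compressed, shared representation
swapped = model.swap(face_x)   # same size as the input image
print(len(face_x), len(latent), len(swapped))  # prints: 64 8 64
```

In a trained system, `reconstruct` is the path used during training for each person, and `swap` is used only afterwards to produce the manipulated frame.
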
Where Did Deepfakes Come From?

The term “deepfake” combines “deep learning” (a subset of machine learning built on multi-layered neural networks) and “fake”. It gained traction in 2017 when a Reddit user named ‘deepfakes’ used the technology to create explicit fake videos of celebrities.

While deepfake technology powers entertainment, gaming, and education, it has also raised alarming concerns.

Why Are Deepfakes a Problem?

Deepfakes are not just fun digital illusions; they pose serious risks:

- Political Manipulation: With elections approaching worldwide, deepfakes can be weaponised to distort speeches, alter events, and mislead voters.

- Threats to Women’s Safety: As with other forms of online abuse, deepfake technology is increasingly used to harass and target women.

- Escalating Conflict: In a world already witnessing wars, deepfakes can be used to incite tensions, spread misinformation, and deepen divisions.

- Erosion of Trust: We used to believe in “seeing is believing.” Deepfakes challenge that notion, making it harder to discern reality from fabrication.

But can we outsmart deepfakes? Let’s explore how to detect them.

How to Identify Deepfakes

With careful observation, you can often spot a deepfake. Here are 10 red flags and habits to help you judge whether a video is real or fake:

- Unnatural Eye Movements: AI struggles with replicating human eye motion, causing blinks to appear robotic or unnatural.

- Audio Mismatches: Words may not sync with mouth movements, and the tone or pitch might sound off or inconsistent.

- Awkward Body Movements: Limbs may appear too long or short, and gestures can look stiff or out of place.

- Facial Anomalies: Watch for inconsistencies in lip, neck, or face movement, especially when AI overlays manipulated content.

- Lighting & Skin Tone Issues: Deepfakes may have inconsistencies in lighting or skin tones, especially around jewelry or complex lighting conditions.

- Blurred or Warped Backgrounds: AI often neglects the background, leading to flickering, distortion, or inconsistency in surrounding objects.

- Stay Informed: Being aware of current events can help you spot discrepancies in deepfake content.

- Verify Before Sharing: Always check the source of content before sharing, especially if it seems suspicious.

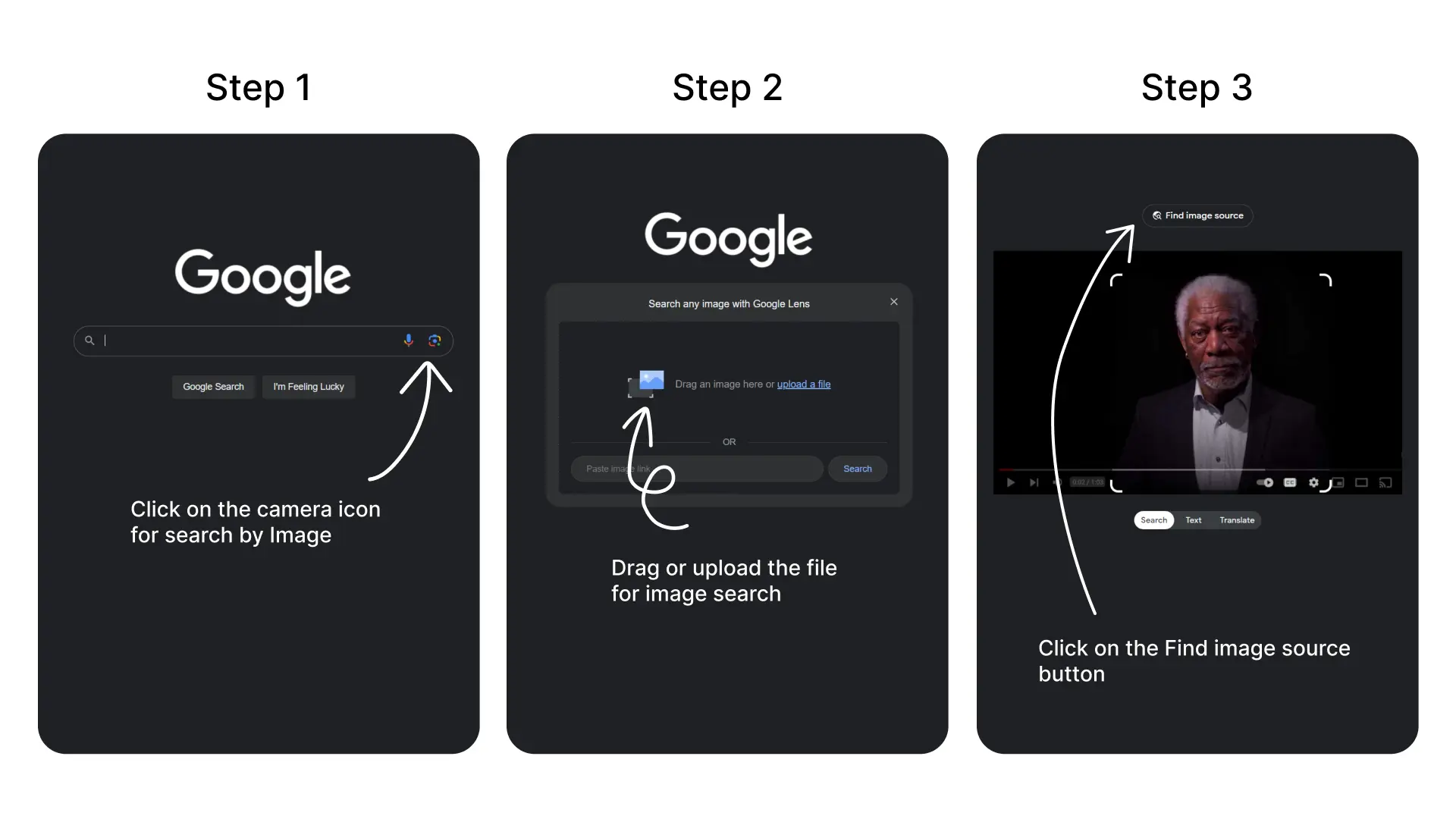

- Reverse Image Search: Use Google’s image search to trace the original source of a screenshot and verify its authenticity. You can do this in three simple steps as shown in the image below.

- AI Tools to Fight AI: Platforms like aivoicedetector.com can help identify fake voices by analyzing audio patterns.
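
The first red flag in the list, unnatural blinking, can be turned into a rough measurement. One widely used heuristic is the eye aspect ratio (EAR): the eyelid opening divided by the eye width, computed from six landmarks around each eye. The sketch below assumes those landmarks already come from some face-landmark detector, which is not shown. The threshold of 0.2 and the two-frame minimum are illustrative assumptions, not calibrated values. A blink count that looks odd is only a hint, since newer deepfakes may blink quite convincingly.

```python
import math

def eye_aspect_ratio(eye):
    """eye: six (x, y) landmarks ordered p1..p6 around one eye.
    p1 and p4 are the corners; p2 and p3 lie on the upper lid, p6 and p5 on the lower lid."""
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = 2.0 * math.dist(p1, p4)
    return vertical / horizontal

def count_blinks(ear_per_frame, threshold=0.2, min_frames=2):
    """Count runs of at least min_frames consecutive frames whose EAR is below threshold."""
    blinks = 0
    run = 0
    for ear in ear_per_frame:
        if ear < threshold:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    if run >= min_frames:  # the clip may end while the eye is still closed
        blinks += 1
    return blinks

# Made-up landmark positions for a wide-open eye
open_eye = [(0, 0), (1, 1), (2, 1), (3, 0), (2, -1), (1, -1)]
ear_open = eye_aspect_ratio(open_eye)

# Made-up per-frame EAR values: two real dips and one single-frame flicker
ear_series = [0.3, 0.3, 0.1, 0.1, 0.3, 0.3, 0.15, 0.3, 0.1, 0.1, 0.1]
print(count_blinks(ear_series))  # prints: 2
```

Dividing the blink count by the clip length gives a blink rate that can be compared against other footage of the same person.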

What’s Being Done About Deepfakes?

Deepfake threats require more than individual vigilance. Governments and tech companies are stepping in.

Global Efforts

- AI Safety Summit (Bletchley Park, 2023): The U.S., U.K., China, Japan, India, and the EU, among others, signed a declaration recognizing AI’s risks in cybersecurity, biotechnology, and disinformation.

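- U.S. Regulations: In October 2023, President Biden issued an executive order requiring developers of the most powerful AI systems to share safety test results with the federal government; the order was rescinded in January 2025. A Deepfake Accountability Bill has been introduced in Congress but has not become law.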

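- EU AI Act: The deepfake transparency provision (Article 52(3) in the draft, Article 50(4) in the final text) requires anyone deploying a deepfake to disclose that the content has been artificially generated or manipulated. A related provision requires providers of generative AI systems to mark their output so that it is detectable as AI-generated.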

- India’s Approach: India is considering requiring WhatsApp to trace the origin of deepfakes under the IT Rules 2021, and may introduce broader regulation similar to the EU AI Act.

Tech Giants’ Response

- Google: Introduced AI-generated content detection tools using metadata and watermarking.

- Meta (Facebook): Launched the Deepfake Detection Challenge to improve identification techniques.

- Operation Minerva: Uses digital fingerprinting to detect deepfakes and revenge porn.

- YouTube’s New Guidelines: AI-generated videos must be disclosed, or creators risk suspension.
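
To give a feel for what “digital fingerprinting” can mean, here is a minimal sketch of one generic technique, the average hash. It is not the method used by Operation Minerva or any company named above; their systems are not described in this article. The idea is to reduce an image to a short string of bits that survives small changes such as brightening or re-encoding, so a re-uploaded copy can be matched against a known original. The tiny 4x4 “images” and the `max_distance` value are made-up assumptions for illustration.

```python
def average_hash(pixels):
    """pixels: 2D list of grayscale values, already downscaled to a small grid.
    Returns a tuple of bits: 1 where a pixel is brighter than the image mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    return tuple(1 if p > mean else 0 for p in flat)

def hamming_distance(hash_a, hash_b):
    """Number of bit positions where the two fingerprints differ."""
    return sum(a != b for a, b in zip(hash_a, hash_b))

def is_probable_match(hash_a, hash_b, max_distance=2):
    """Treat two images as the same content if their fingerprints are close."""
    return hamming_distance(hash_a, hash_b) <= max_distance

# Dark left half, bright right half
original = [
    [10, 20, 200, 210],
    [15, 25, 205, 215],
    [12, 22, 202, 212],
    [18, 28, 208, 218],
]
# The same picture, slightly brightened (as a re-upload might be)
brightened = [[p + 10 for p in row] for row in original]
# A different picture: dark top half, bright bottom half
different = [list(column) for column in zip(*original)]

fp = average_hash(original)
print(hamming_distance(fp, average_hash(brightened)))  # prints: 0
print(hamming_distance(fp, average_hash(different)))   # prints: 8
print(is_probable_match(fp, average_hash(different)))  # prints: False
```

Production systems use larger grids and more robust hashes, but the matching step works on the same principle: compare fingerprints, not raw files.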

Our Role in Tackling Deepfakes

Technology alone won’t solve the deepfake problem. It’s up to us to stay alert, verify sources, and think critically before trusting online content. The fight against AI deception starts with awareness—because in the digital age, misinformation spreads faster than the truth.
